The Weight of the Red Dot

The smallest sliver of people is making design choices right now that determine capability or fragility for everyone else. Does the thing you're building make the people around it more capable?

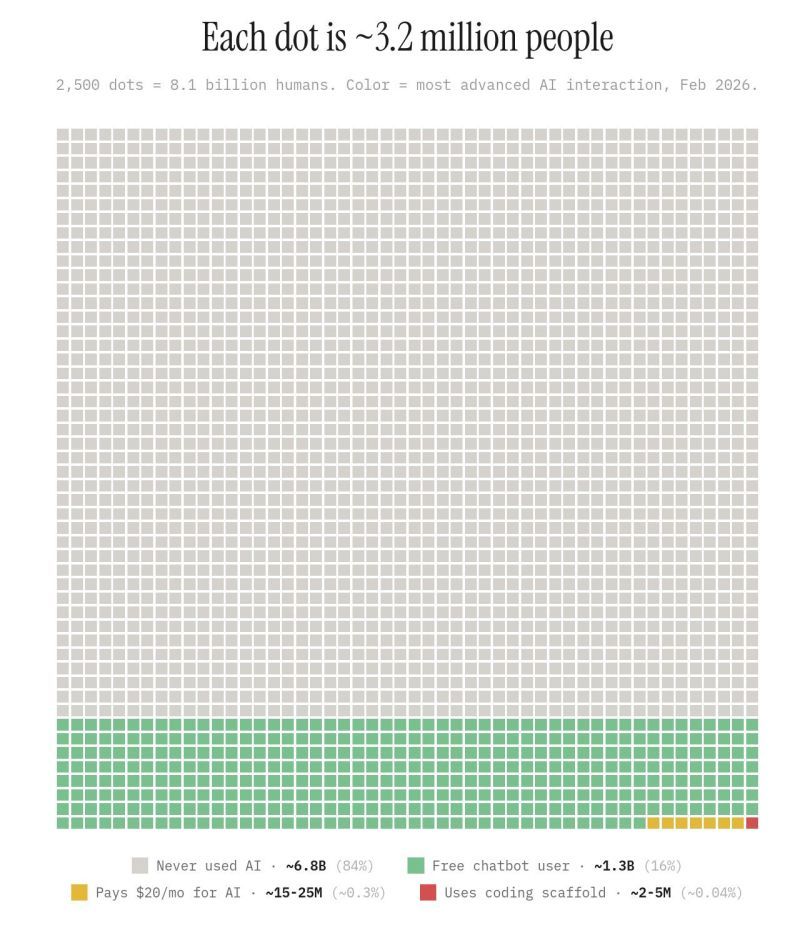

You may have seen this chart circulating, especially if you're a red dot. Each dot represents roughly 3.2 million people. The gray ones, the overwhelming majority, haven't knowingly used AI. The colored dots at the bottom have. Green and orange are building basic fluency. The red dots, the smallest sliver, are building with AI tools daily, shaping how they work, what they produce, and who they serve.

Most people look at this and see an adoption curve. Early adopters at the bottom, mainstream to follow, the usual S-curve story.

That's not what this chart shows.

This is a map of who currently stands between AI capability and organizational reality. The colored dots are making live decisions, right now, about what gets automated, what stays human, what gets built, and for whom. Every one of those decisions compounds. Most of them are being made on autopilot.

And the work those decisions produce? Overwhelmingly, it is replication. Drafting emails faster. Summarizing summaries. Producing content that probably shouldn't exist at all. When the printing press arrived, the first instinct was to print copies of manuscripts that already existed. That act transformed the delivery of information. It did not create new knowledge. The new knowledge came later, from what happened when that information hit new hands at new scale. We are in the manuscript-copying phase and mistaking it for a revolution.

Transformation starts when the people using the tools stop asking "how do I do this faster?" and start asking "should this be done at all?" Not "how do I implement AI?" but "what would I build if I started from what's actually possible?"

Very few are asking those questions yet.

Here's what concerns me about the default path. Most AI deployments today are designed to remove work from people. That sounds like progress. But what gets removed isn't just labor. It's contact with the thing itself: the understanding of why decisions get made the way they do, where the edge cases live, what the model doesn't see. Automate the task far enough and you lose the ability to evaluate whether the task was done right. Automate further and you lose the ability to evaluate whether it even matters.

Scale that across an organization and a pattern emerges that should worry anyone paying attention. Roles eliminated. Positions unfilled. Layers of judgment replaced with automated confidence. No single automation decision was necessarily wrong. But the accumulation of those decisions produces an organization that is moving faster and seeing less. By the time conditions shift and the mismatch surfaces, the people who would have noticed are gone. They were optimized out in service of last quarter's KPIs.

The data points the same way. By some industry estimates, around ninety percent of companies running AI pilots haven't been able to scale them. Production systems are failing because nobody built the safeguards. Change management is, by the admission of the firms doing the work, more than a year behind the tools they're selling. These aren't growing pains. These are the predictable symptoms of deployment without understanding.

So what does this mean for the people in the colored dots? You are making the design choices that determine which direction this goes for all those gray ones. Not in some abstract future. In the systems you're building this week and the processes you are automating today.

The distinction that matters isn't between good uses and bad uses. It's between deployments that leave an organization more capable of understanding what's emerging and deployments that leave an organization dependent on systems it can't evaluate. One compounds capability. The other compounds fragility. At the point of decision, they often look identical. That's what makes the choice so consequential and so easy to get wrong.

If you're a practitioner working with these tools, the question worth sitting with isn't whether you carry responsibility. You do. The question is more specific. Does the thing you're building make the people around it more capable of seeing what they need to see? Can they understand what the system is doing and why? When conditions change, will someone still be in a position to notice?

If you can't answer those questions about the thing you shipped last week, that's the signal.

Your name is on this work. Make it count.